AI Face Restoration & Enhancement: Restore Blurry, Degraded, and Pixelated Faces

Many papers, resources, and tutorials explain how to restore faces in images and videos using a range of methods. They describe the techniques in detail and serve anyone who wants to restore and enhance blurry, degraded, or pixelated faces.

However, many of these resources are too academic to offer practical guidance for everyday use. Some video tutorials show how to code a solution yourself, but that is of little help to people without programming experience.

This article aims to help readers understand face restoration and enhancement techniques and find practical methods. It introduces the main technologies in the field and shows how to use software to restore and enhance faces in images and videos.

- What Is Face Restoration & Enhancement?

- The Main Factors That Contribute to the Degradation of Facial Images

- The State of Development in the Field of Deep-Learning Face Restoration

- Technologies (Open-Source) Used for Face Restoration & Enhancement

- The Use Cases of Face Restoration & Enhancement

- Explore the Dedicated Software for Face Restoration & Enhancement

What Is Face Restoration & Enhancement?

The concept of face restoration was first proposed in 2000 by Baker et al., who developed the first prediction model to enhance low-resolution face images. Since then, numerous face restoration methods have been developed, from traditional to deep learning-based, and from non-blind to blind and beyond. In summary, face restoration is a dedicated field within image restoration that aims to recover high-quality face images from low-quality ones.

Face enhancement is a branch of image enhancement. Its primary goal is to enhance facial details and remove noise, blemishes, compression artifacts, and glitches, so that faces appear clearer and overall quality improves.

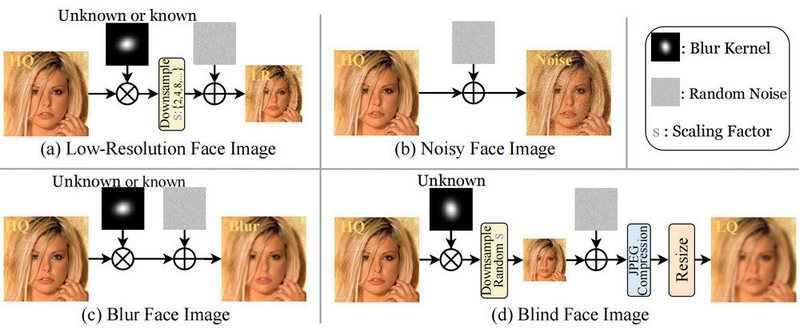

The Main Factors That Contribute to the Degradation of Facial Images

Face images in real-world scenarios undergo degradation during imaging and transmission due to the complex environment. Such degradation primarily stems from the limitations of physical imaging equipment and external imaging conditions. Let's outline the key factors that contribute to image degradation:

1. Lighting that is too low or too high.

2. The camera's performance is influenced by both internal and external factors. Internal factors include optical imaging conditions, noise, and lens distortion. External factors involve relative displacement between the subject and the camera, such as the camera shaking or capturing a moving face.

3. Lossy compression during transmission and surveillance storage.
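The factors above are often simulated in code when researchers need degraded test images. The following is a minimal sketch, assuming a grayscale image stored as a NumPy float array in the range [0, 1]. The function name `degrade` and all of its parameters are hypothetical, and the coarse quantization step is only a crude stand-in for real lossy compression such as JPEG.

```python
import numpy as np

def degrade(img, blur_size=5, scale=4, noise_sigma=0.05, levels=16, seed=0):
    """Apply blur -> downsample -> noise -> coarse quantization to a 2-D image in [0, 1].

    All parameters are illustrative assumptions; blur_size is expected to be odd.
    """
    rng = np.random.default_rng(seed)

    # 1. Blur: a box filter as a simple stand-in for camera shake or defocus.
    pad = blur_size // 2
    padded = np.pad(img, pad, mode="edge")
    blurred = np.zeros_like(img, dtype=np.float64)
    for dy in range(blur_size):
        for dx in range(blur_size):
            blurred += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    blurred /= blur_size ** 2

    # 2. Low resolution: keep every `scale`-th pixel in each direction.
    small = blurred[::scale, ::scale]

    # 3. Sensor noise: additive Gaussian noise.
    noisy = small + rng.normal(0.0, noise_sigma, size=small.shape)

    # 4. Lossy storage: quantize to a few gray levels (NOT real JPEG).
    clipped = np.clip(noisy, 0.0, 1.0)
    return np.round(clipped * (levels - 1)) / (levels - 1)

# Synthetic 64x64 gradient used as a placeholder "high-quality" image.
hq = np.linspace(0.0, 1.0, 64 * 64).reshape(64, 64)
lq = degrade(hq)
print(hq.shape, "->", lq.shape)
```

Chaining the steps in this order mirrors the list above: imaging conditions first, then storage and transmission losses.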

The State of Development in the Field of Deep-Learning Face Restoration

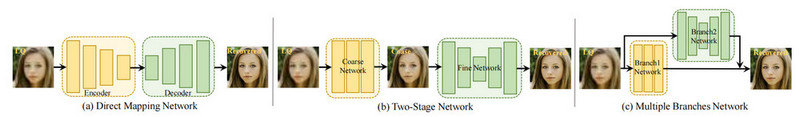

Over time, traditional face restoration methods have proven insufficient for engineering requirements. As a result, many deep learning-based methods have emerged for face restoration tasks. Trained on large-scale datasets, deep networks learn diverse mappings between degraded face images and real face images, which makes them more robust and reliable than traditional methods in real-world applications. This section gives a concise overview of how deep learning-based face restoration has developed.

Face restoration methods can be classified into three categories: non-blind, blind, and joint restoration tasks.

What is non-blind face restoration?

The non-blind method is the foundational approach in face restoration. It assumes that the type of degradation is known in advance, for example a fixed downsampling factor. One early example is the bi-channel convolutional neural network (BCCNN), which merges extracted face features with the input face features and then uses a decoder to reconstruct high-quality face images, relying on the network's strong fitting capability. The Generative Adversarial Network (GAN) also features prominently in non-blind tasks because of its ability to generate visually appealing images.

What is blind face restoration?

Non-blind methods have shown limitations in real-world applications, where they struggle with low-quality face images whose degradation is unknown. Consequently, the field is gradually shifting towards blind tasks, both to cover a broader range of application scenarios and to cope with the difficulty of obtaining paired low-quality (LQ) and high-quality (HQ) images in the real world. Blind face restoration focuses on recovering high-quality faces from degraded counterparts that suffer from low resolution, noise, blur, compression artifacts, and more. The goal is to improve the visual quality and fidelity of facial images across various domains and applications.

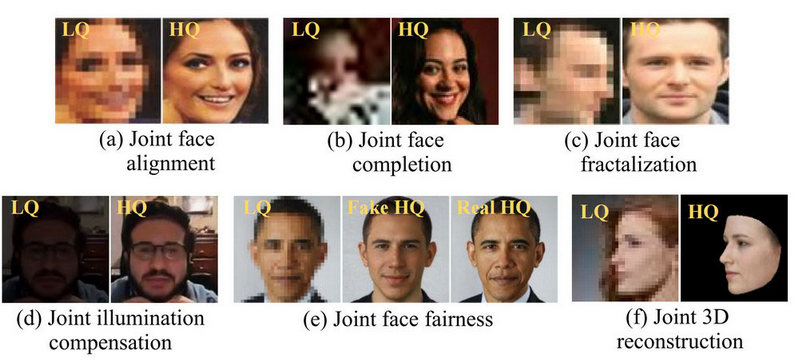

Joint restoration tasks

Face restoration also involves several joint tasks: joint face completion (fix occluded and low-quality faces), joint face frontalization (primarily designed for frontal faces), joint face alignment (the use of aligned face training samples for optimal performance), joint face recognition (the restored face image matches the ground truth in terms of identity), joint face illumination compensation (improve the restoration performance of current algorithms on low-light, low-quality faces), joint 3D face reconstruction, and joint face fairness (employ suitable algorithms to mitigate racial bias).

Technologies (Open-Source) Used for Face Restoration & Enhancement

Face restoration is an evolving field that has attracted significant attention in recent years. Numerous methods have been proposed and trained to address its challenges and limitations, and improved versions have been progressively integrated into real-world applications. In this article, we introduce two widely used open-source methods that have proven highly effective.

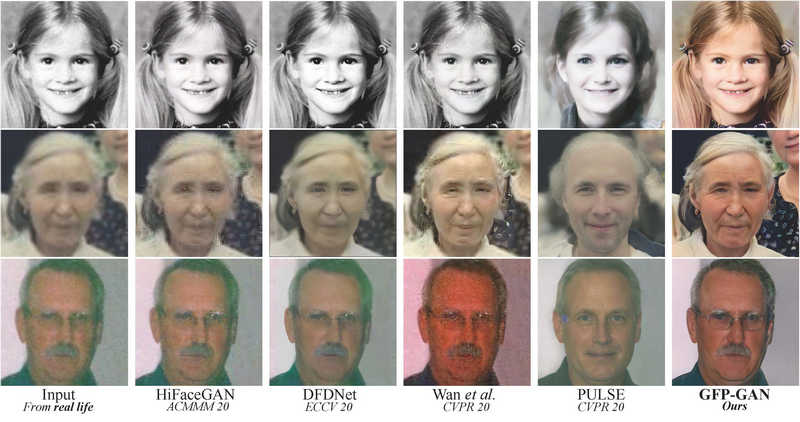

GFP-GAN

GFP-GAN (Generative Facial Prior GAN) was developed for cases where low-quality inputs cannot provide an accurate geometric prior and high-quality references are unavailable, two problems that limit the applicability of earlier methods in real-world scenarios. The model is designed to strike a balance between realism and fidelity.

The GFP-GAN framework comprises two key components: a degradation removal module (U-Net) and a pre-trained face GAN that serves as a facial prior. These components are seamlessly connected through latent code mapping and a series of Channel-Split Spatial Feature Transform (CS-SFT) layers. The purpose of the degradation removal module is to explicitly eliminate degradations such as low-resolution, blur, noise, or JPEG artifacts, while simultaneously extracting pristine features. On the other hand, the face GANs possess the capability to generate highly faithful faces, exhibiting a remarkable range of variability. This diversity enables the framework to leverage rich priors, encompassing facial geometry, textures, and colors. Consequently, the joint restoration of facial details and color enhancement can be achieved, enhancing the overall outcome.
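The channel-split idea behind the CS-SFT layers can be illustrated with a small sketch. It assumes a feature map shaped (channels, height, width) and uses random placeholder arrays for the scale and shift parameters; in GFP-GAN these are predicted from the features of the degradation removal module. The function name `cs_sft` is hypothetical, and this is a simplified illustration rather than the project's actual implementation.

```python
import numpy as np

def cs_sft(gan_features, scale, shift):
    """Channel-split spatial feature transform on a (C, H, W) feature map.

    The first half of the channels passes through unchanged, which preserves
    the generative prior. The second half is modulated per pixel as
    scale * x + shift, which injects information from the degraded input.
    """
    half = gan_features.shape[0] // 2
    identity_part = gan_features[:half]
    modulated_part = scale * gan_features[half:] + shift
    return np.concatenate([identity_part, modulated_part], axis=0)

rng = np.random.default_rng(0)
f_gan = rng.normal(size=(8, 4, 4))   # placeholder GAN features
alpha = rng.normal(size=(4, 4, 4))   # placeholder scale (assumed, not learned here)
beta = rng.normal(size=(4, 4, 4))    # placeholder shift (assumed, not learned here)

out = cs_sft(f_gan, alpha, beta)
print(out.shape)
```

Modulating only part of the channels is what lets the framework trade off realism (untouched prior channels) against fidelity (input-conditioned channels).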

CodeFormer

CodeFormer, a Transformer-based prediction network, was devised to effectively capture the overall composition and context of low-quality faces in code prediction. This breakthrough technology allows for the identification of natural faces that closely resemble the target faces, even in cases of severe degradation.

CodeFormer also introduces a controllable feature transformation module that improves its adaptiveness to different degradation levels by allowing a flexible trade-off between fidelity and quality. Combined with the expressive codebook prior and global modeling, CodeFormer is reported to surpass existing methods in both quality and fidelity. It is also highly robust to degradation.
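The fidelity and quality trade-off can be pictured as a single weight that controls how strongly input-derived information adjusts the decoder features. The sketch below is a simplified illustration under that assumption: the function name and the placeholder `scale` and `shift` arrays are hypothetical, whereas in CodeFormer the corresponding parameters are predicted by the network from encoder and decoder features.

```python
import numpy as np

def controllable_feature_transform(decoder_feat, scale, shift, w):
    """Adjust decoder features with input-conditioned scale/shift, weighted by w.

    w = 0 leaves the codebook-driven decoder features untouched (favors quality).
    w = 1 applies the full input-conditioned adjustment (favors fidelity).
    """
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must be in [0, 1]")
    return decoder_feat + w * (scale * decoder_feat + shift)

rng = np.random.default_rng(0)
f_dec = rng.normal(size=(8, 4, 4))   # placeholder decoder features
alpha = rng.normal(size=(8, 4, 4))   # placeholder scale (assumed)
beta = rng.normal(size=(8, 4, 4))    # placeholder shift (assumed)

for w in (0.0, 0.5, 1.0):
    out = controllable_feature_transform(f_dec, alpha, beta, w)
    # Mean absolute change grows with w: more of the input's information is used.
    print(w, float(np.abs(out - f_dec).mean()))
```

A small weight suits heavily degraded inputs, where the input carries little reliable detail; a larger weight suits mild degradation, where staying close to the input preserves identity.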

CodeFormer stands out among other state-of-the-art methods for its ability to generate high-quality faces while also preserving identity even under highly degraded inputs. Furthermore, it exhibits exceptional robustness against a wide range of real-world degradation levels, including heavy, medium, and mild.

The Use Cases of Face Restoration & Enhancement

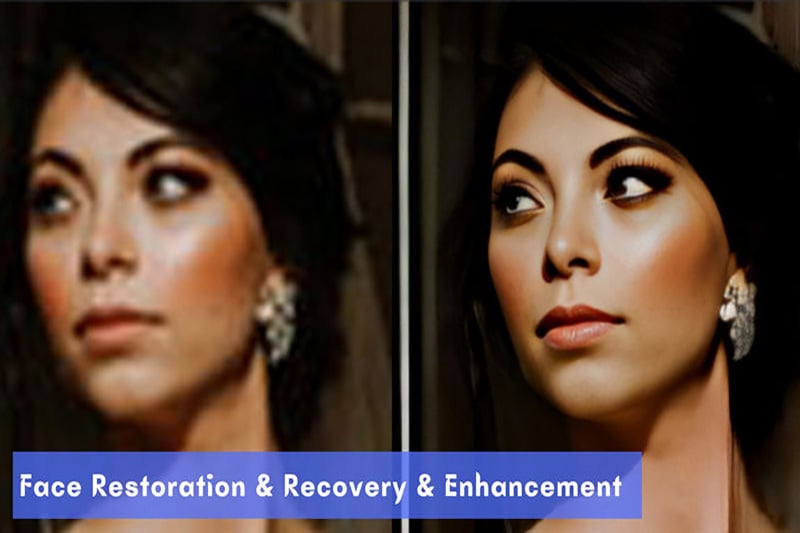

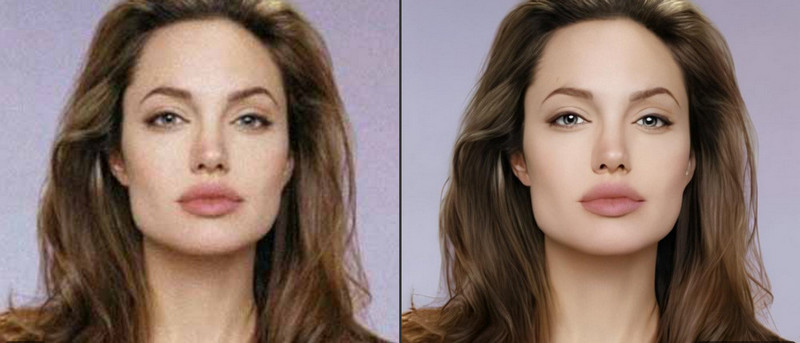

Face restoration and recovery play a crucial role in enhancing overall image quality, and the factors contributing to face degradation are complex. As the field has gained significant attention in recent years, numerous methods, tools, and models have been developed and trained to address these challenges and fix various quality issues in face images and videos. The following examples show what face restoration and enhancement can do.

1. Restore and recover extremely blurry and nearly invisible faces.

2. Remove pixelation from a face image and sharpen it to make it clearer.

3. Recover details from low-resolution images.

4. Remove noise and enhance facial details.

5. Restore out-of-focus faces.

6. Remove blurs and blemishes to beautify the face images.

Explore the Dedicated Software for Face Restoration & Enhancement

Because the technologies mentioned above are hard to apply in daily life, you may wonder whether any dedicated software exists for face recovery and enhancement. In fact, most photo editing software and photo enhancement tools can recover and enhance face images, so we won't cover them all here. In this section, we focus on a specialized video enhancement program that stands out from its rivals for its exclusive face recognition, face restoration, and face enhancement technology.

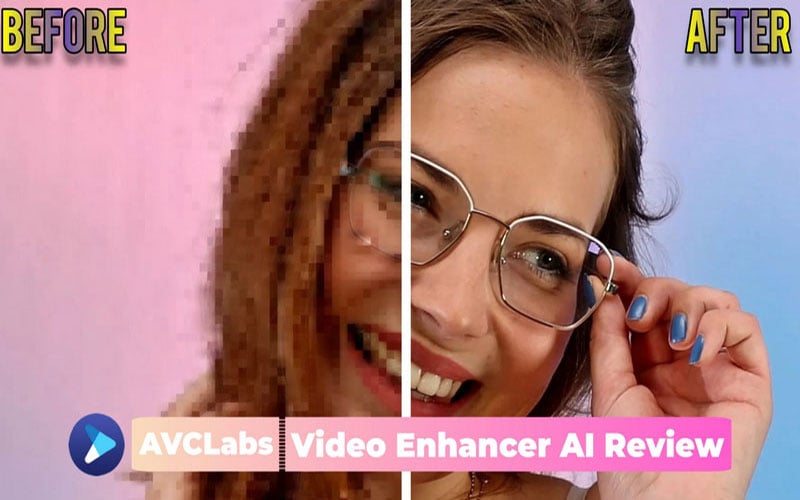

When AVCLabs Video Enhancer AI is mentioned, most users think of its face enhancement feature. Let’s look at customer reviews of this feature for a better understanding.

"Facial refinement or recovery in Video Enhancer is again a fairly straightforward process, simply click on the facial refinement button and you’re done. The AI searches the video and refines or recovers any faces or facial features it can find in your footage." -- Gavi from Trustpilot

"You people weren't kidding. The face enhancement tool is unbelievable. I'm still in shock. Before last night I would have thought this was impossible but damn. From almost blurred beyond recognition to being able to see the creases in lips on magnification. That is some crazy stuff, call me a believer." -- James from Email Ticket

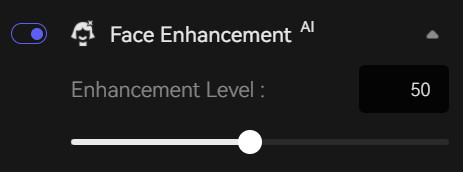

The face detection, face recognition, and face enhancement models are independently developed and trained by AVCLabs. The AI face recognition models combine deep-learning-based face recognition, pose detection, and re-identification, which play critical roles in face restoration and enhancement. Since V3.3.0, the improved face enhancement model lets users adjust the enhancement intensity from 0 to 100, so it can adapt to video sources of different resolutions and balance quality (the best-looking result) with fidelity (faithfulness to the original footage).

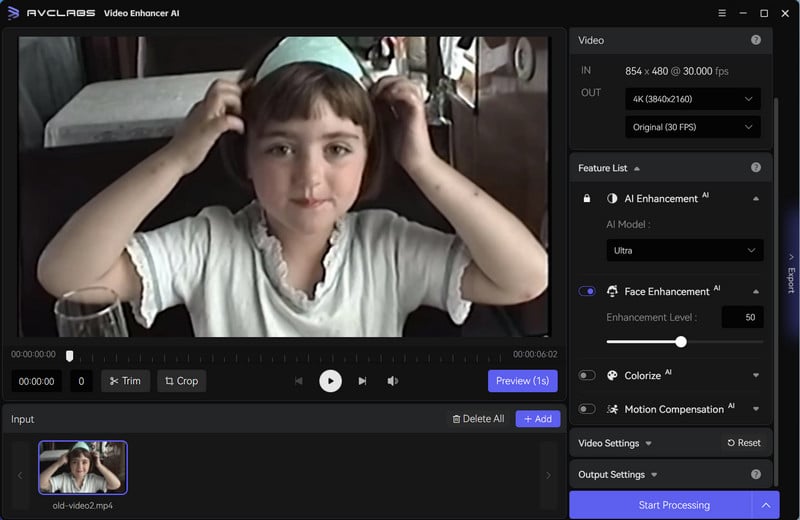

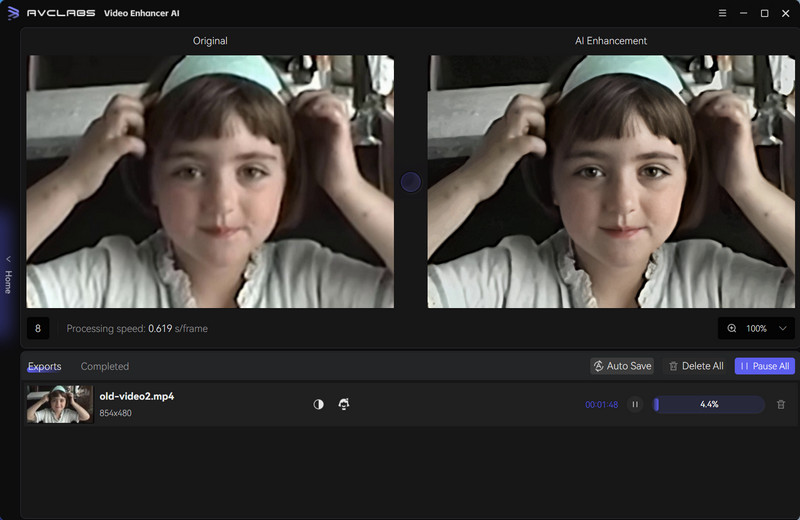

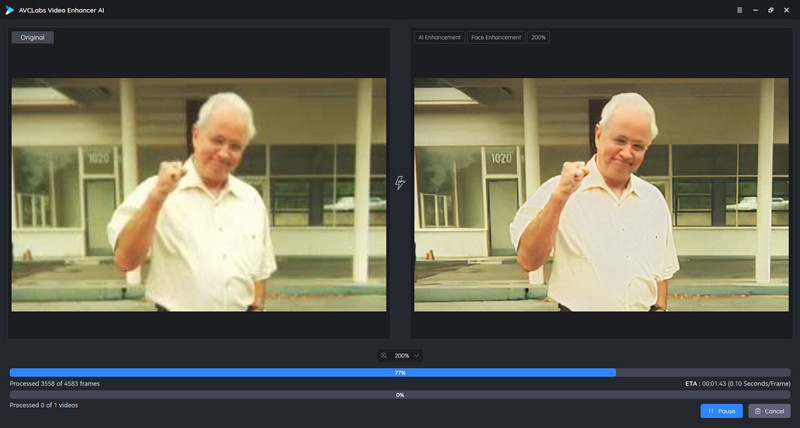

Let's look at how AVCLabs Video Enhancer AI restores degraded faces and recovers facial details from blurry, pixelated, or noisy video footage.

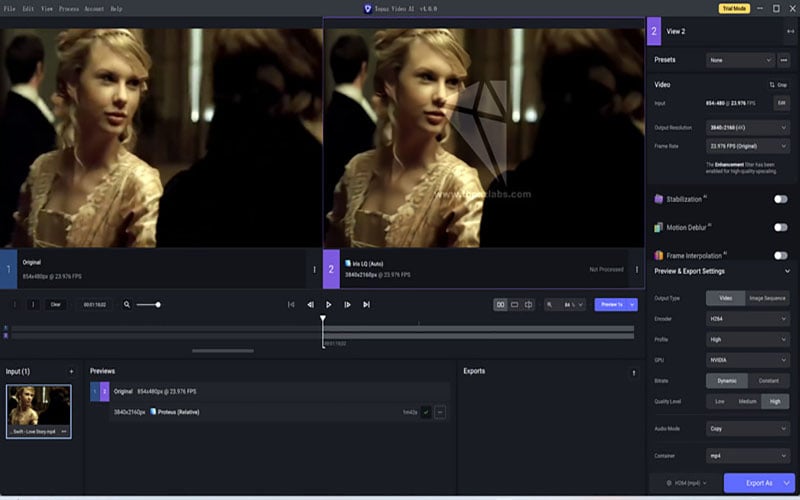

Step 1: Import the face videos into AVCLabs

To begin, please download the .exe file for Windows PC or the .dmg file for Mac. Afterward, run the installation file to initiate the AVCLabs Video Enhancer AI installation process. It is advisable to ensure that your Windows PC is equipped with a dedicated NVIDIA GPU, while your Mac should have either the M1 or M2 chip.

Once installed, launch the application and proceed to import face videos.

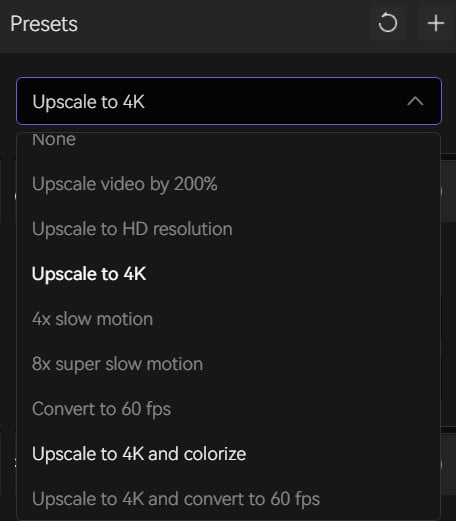

Step 2: Select the AI model and adjust the intensity of face enhancement

Navigate to the top right corner and select an AI preset you prefer from the Presets list. For both faster video rendering speed and superior quality, we highly recommend choosing the "Upscale video by 200%" option.

We generally pick 45-50 as the face enhancement level to keep the result natural-looking and avoid a cartoonlike or CG appearance. However, if the source video has severely blurry or out-of-focus faces, consider increasing the enhancement level to 70 or higher.

Here we imported a music video with a resolution of 504x284 and selected "Upscale to 4K" as the preset, with the Face Enhancement Level set to 45.
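To get a feel for how much upscaling these presets imply for a 504x284 source, the arithmetic can be sketched as follows. The snippet assumes that "4K" means 3840x2160 and that the frame is scaled to fit inside the target while keeping its aspect ratio; how AVCLabs actually fits the frame is not stated here, and the function name `upscaled_size` is hypothetical.

```python
def upscaled_size(width, height, target_width, target_height):
    """Return (scale, new_width, new_height) that fits inside the target
    resolution while keeping the source aspect ratio."""
    scale = min(target_width / width, target_height / height)
    return scale, round(width * scale), round(height * scale)

# "Upscale to 4K", assuming 4K = 3840x2160.
scale, new_w, new_h = upscaled_size(504, 284, 3840, 2160)
print(f"4K preset: about {scale:.2f}x, giving {new_w}x{new_h}")

# "Upscale video by 200%" simply doubles each dimension.
print(f"200% preset: {504 * 2}x{284 * 2}")
```

Under these assumptions the 4K preset enlarges each dimension by roughly 7.6 times, compared with 2 times for the 200% preset, which helps explain why the latter renders faster.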

Step 3: Preview and start processing

After making the adjustments, click the "EYE" icon to preview the effect on 30 frames. If the result is not satisfactory, exit the preview and continue fine-tuning. Once you are happy with the result, stop the preview and click the "Start Processing" button to render the final video.

Video Tutorial: AVCLabs Video Enhancer AI V3.3.1: Face Enhancement Tool Improved

Final Thoughts

Face image and video restoration has been attracting significant attention; its aim is to reproduce a clear and realistic face from degraded input. Tremendous advances have been made in this field, and various technical approaches are now used in practical applications. AVCLabs is one of the organizations devoting considerable effort to face recognition and enhancement technologies. If you want to turn low-quality (LQ) face images and videos into high-quality (HQ) ones, AVCLabs Video Enhancer AI is worth trying.